Posts filed under “Science & Nature”

TEMPORAL CUBISM AND THE VOYNICH MANUSCRIPT.

This Magazine has run more than one article on the Voynich Manuscript, and in particular about the cranks who have left comments on the Internet Archive scan of the manuscript explaining how they have figured it all out. Whether the fact that Dr. Boli enjoys mocking the cranks so much means that he is a crank himself is a question he will leave the cranks to debate.

Every so often someone stumbles across one of those articles and leaves a comment explaining the Voynich Manuscript, or explaining one of the previous explanations and why it is actually on the right track. It happened just recently with our article “The Voynich Manuscript: Now Even More Figured Out.” One of the many explanations of the manuscript we had quoted was this one:

It’s a little bit religious and astronomical, but it’s main subject is biology. There are numerous ancient books in eastern world that mixing religion and biology, also they mixed religion and astronomy. So my opinion is that the book is describing plant’s biology with many exemplifications and associating them with religion and astronomy, and totalizes them with the help of woman characters to understand them.

Our commenter wrote:

I actually think the fifth interpretation here, that the VM is at least in part a coded reference to “Eastern” (a term I generally dislike but is probably somewhat appropriate for the late medieval period the VM arose from) philosophy and religion, is not totally unreasonable. Diane O’Donovan at voynichrevisionist.com has argued similar things.

The site in the link, incidentally, seems to be remarkably free from obvious crankery, and even shows a considerable respect for scientific method and evidence, both of those observations making us think that perhaps Ms. O’Donovan would not have been as kind to the explanation quoted as our commenter was.

But what arrested Dr. Boli’s attention about the comment was the commenter’s email address, and the commenter is invited to explain it here. The address is the words “Time Cube,” plus enough added characters that no one could guess the rest from the information we have just given out. It is quite clear, however, that the address is meant to refer to the Time Cube theory; and we are now going to follow that rabbit into its hole, because the mere mention of the Time Cube caused Dr. Boli to wallow in nostalgia for the good old days of the early Internet.

The empire of crankdom is a blotchy and disorganized country, like the Holy Roman Empire, with its member states constantly at war with one another as well as with the nations outside the borders. Nevertheless, even in such a mess of an empire, there has to be one emperor; and until his still-lamented death eleven years ago, the emperor of all cranks was Gene Ray, whose site first appeared in 1997, and who at various times offered a thousand or ten thousand dollars to anyone who could prove his Time Cube theory wrong.

Many cranks have done the same. There is no proving them wrong, of course, on the usual grounds that proof against the conspiracy is proof of the conspiracy. The flat-earther knows that the earth is flat; every bit of scientific evidence to the contrary merely shows that the conspiracy to keep the truth from us is all-pervading and nearly omnipotent.

But there was a fundamental difference that set the Time Cube theory apart from most of the other crankeries. When you argue with a flat-earther, you can understand his assertions: the world, he says, has this shape, not that shape. The idea of a flat earth is comprehensible. Likewise, the man who believes that the world is secretly run by a cabal of reptiles from another planet may be right or wrong, but he is making an assertion that, in itself, can be understood and affirmed or denied. And so with all the other cranks: whether they believe that they have discovered the one root vegetable that cures all known diseases or that the emperor Constantine wrote the New Testament on the back of an envelope, you can argue with them. You won’t win the argument; the crank will shake his head sadly at your naivety and pity you for being such a dupe. But when the crank makes an assertion, you can deny it, and explain why you deny it.

With Gene Ray and the Time Cube, you do not have that luxury. His theory is so incomprehensible that you cannot even deny it. You can only read or listen and say, “Huh?”

Like many cranks, Ray put everything he knew about everything on a single Web page, all centered, with long passages in all caps and many different sizes and colors of text. In time it grew to sequential pages, but all in the same non-format. The site was constantly under revision, but here is how the last version of it began when Mr. Ray died in 2015:

In 1884, meridian time personnel met in Washington to change Earth time. First words said was that only 1 day could be used on Earth to not change the 1 day bible. So they applied the 1 day and ignored the other 3 days. The bible time was wrong then and it proved wrong today. This a major lie has so much evil feed from it’s wrong. No man on Earth has no belly-button, it proves every believer on Earth a liar. Children will be blessed for Killing Of Educated Adults Who Ignore 4 Simultaneous Days Same Earth Rotation. Practicing Evil ONEness – Upon Earth Of Quadrants. Evil Adult Crime VS Youth. Supports Lie Of Integration. 1 Educated Are Most Dumb. Not 1 Human Except Dead 1. Man Is Paired, 2 Half 4 Self. 1 of God Is Only 1/4 Of God. Bible A Lie & Word Is Lies. Navel Connects 4 Corner 4s. God Is Born Of A Mother – She Left Belly B. Signature. Every Priest Has Ma Sign But Lies To Honor Queers. Belly B. Proves 4 Corners.

Well, perhaps that is not the best introduction to his theory, since it seems to assume that we already know what it is. But there are many places in the page where Ray does try to summarize the truth in terms that even stupid people like us can understand.

Hey stupid – are you too dumb to know there are 4 different simultaneous 24 hour days within a single rotation of Earth? Greenwich 1 day is a lie. 4 quadrants = 4 corners, and 4 different directions. Each Earth corner rotates own separate 24 hour day. Infinite days is stupidity.

He even gave us diagrams:

This diagram was redrawn from the fuzzy original on the Time Cube site by a heroic Wikimedia contributor with too much time on her hands.

And yet we persist in our culpable incomprehension!

The one thing that we can understand about Ray’s theory is that, whatever is evil in the world, the Jews and Blacks are behind it. The Jews began the 1-day lie; the Blacks are using it to oppress White Americans. It made Mr. Ray very angry.

And that is another thing that distinguished Ray from the ordinary run of cranks. Most cranks simply pity you for not seeing the truth. Ray hated you and told you in no uncertain terms that he was going to kill you when he got the chance.

Since I have informed you of Nature’s Harmonic Time Cube 4-Day Creation Principle, your stupidity is no longer the issue. For now, the issue is just how evil you are for ignoring Life’s Highest Order, and just how long the Time Cube will allow you to plunder Earth before inflicting hell upon you.

And one principle he taught to the young ones, over and over, for the whole time his site was on the Web (as we see from a 1998 capture of the site), was this cheering dogma:

Children are justified in killing adults “refusing” to know Nature’s 4 Day Time Cube Creation Principle.

Now, what has all this to do with the Voynich manuscript?

The only answer Dr. Boli could come up with was the principle of crank magnetism. “Crank magnetism,” says the site FlatEarth.ws, “is the tendency of ‘cranks’ to hold multiple irrational, unsupported, or ludicrous beliefs that are often unrelated.” The idea that essential knowledge is being deliberately suppressed opens one’s mind, in the same way that stepping on the foot pedal opens the rubbish bin in Dr. Boli’s office, and anything can be tossed in.

Incidentally, is it an exercise in crankery to build up a site with dozens of illustrated proofs that the earth is not flat, or is it simply a necessity to belabor the obvious in the age of social media? Here is your essay topic for the day. Meanwhile, Dr. Boli will get to work on his new pro-science site, “GRASS IS REAL,” which will refute the unfounded theory that the existence of the family Gramineae is a botanical hoax foisted upon us by members of the Bhutanese royal family as part of their centuries-long plot to deprive us of the knowledge of the carefree ground-covers used by our ancient ancestors. He was thinking of putting all the information on one page, centered, using multiple sizes and colors of text to emphasize salient points.

THE ROYAL ROAD TO ESPIONAGE.

You probably have to work up a cover story, order a fake ID from the Section of False Documents, put on a disguise, travel to the site, worm your way in somehow, and surreptitiously take several rolls of 16-millimeter film with your little Minox.

Wouldn’t it be more convenient if you could just sit in your comfortable government-issue desk chair and see, without going anywhere, exactly what was going on at those coordinates?

Of course it would be. And there’s wonderful news! Thanks to the science of Coordinate Remote Viewing—or CRV, as it is known to the cognoscenti—you can do exactly that. You can sit in your own chair, psychically tune in on those coordinates, and see and hear eight people slumped in their chairs snoring loudly while a ninth drones on about the substandard materials used in the resurfacing of the 1400 block of Beechwood Boulevard, because those are the coordinates of the City-County Building, and Pittsburgh City Council is in session.

How do you learn this wonderful remote viewing? It’s really quite simple. And because the CIA produced a working paper in 1985 outlining the training method, you can follow along at home and be a CRV professional in just a few easy steps.

We begin, as we did above, with just a set of coordinates.

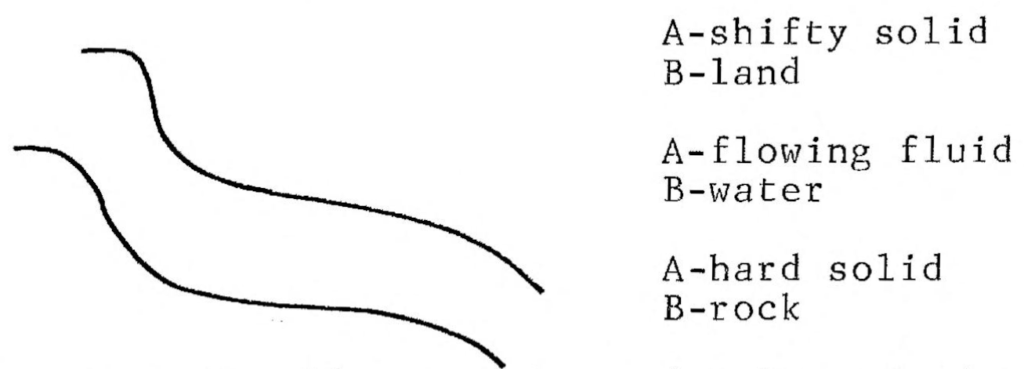

In Stage I the viewer is trained to provide a quick-reaction response to the reading of geographic coordinates by the interviewer. The coordinates are expressed in degrees, minutes, and seconds when possible. The response takes the form of an immediate, primitive “squiggle” on paper. This “squiggle” is known as an ideogram. The ideogram captures the overall feeling/motion of the gestalt of the site (e.g., fluid/wavy for water). This response is kinesthetic and not visual.

These ideograms are accompanied by (A) a “feeling/motion” and (B) an “automatic analytical response.” Or perhaps more than one of each, as in this sample illustration:

As you see, we’re already getting somewhere. Our site at these coordinates is either land or water or rock. Already we have eliminated the possibility of void.

Dr. Boli will not presume to teach the art of CRV when the CIA’s manual is both thorough and accessible. But the potential of the technique should be obvious. Your enemies cannot keep a secret if your agents can simply sit in their offices in Langley and see any arbitrary location in the world.

By Stage VI of the training, the viewer trainee is ready to be issued his modeling clay (or cardboard, or whatever he prefers to work with) and can start constructing a three-dimensional model of the site, using mostly the sense of touch.

That was the stage the research had reached by the time the working paper was produced. But the eye-opening Chapter 10, “Future Stages,” reveals the full brilliance of the scientific minds behind this program (even if they couldn’t spell “affect”).

STAGE X REMOTE ACTION (RA) Stage X would be mind-over-matter, also known as psychokinesis (PK). We have very little understanding of PK, but we do know it exists. If Stage IX is telepathic signals which effect people, it is logical the next stage would be RA signals which effect “things”.

STAGE XI ALTERING THE DIMENSIONALITY AT THE SITE This is the most difficult stage to understand. Time is considered another dimension, but there may be many more. Mathematically it is considered that there are infinite numbers of dimensions. Stage XI would be broken into at least two phases:

PHASE I would be altering time at the site. Time could be frozen, moved forward, or moved back. The implications of this are mind boggling. I believe this is the first stage where we could truly effect (alter) the future (as well as the past and the present).

PHASE II Maybe by the time we reach Stage XI we will understand enough about alternate dimensions to use this phase. I believe there would probably be an additional phase for each additional dimension we discover.

“Mind boggling” indeed! Yet, if you can believe it, the unpatriotic spoilsports in the Clinton administration canceled this mind-boggling program, simply because (according to Wikipedia) “evaluators concluded that remote viewers consistently failed to produce actionable intelligence information.” The super-secret training techniques for CRV were actually released to the public, so that our enemies now have as much information about it as we ever had.

Still, there is a silver lining. If our government gave up on the program, the release of the working paper at least gave private individuals a chance to pick up where the CIA’s research left off. Using the techniques he learned from the working paper, Dr. Boli decided to try an experiment. At the moment this working paper was being compiled in the CIA, what was going on in the KGB? Having looked up the coordinates of KGB headquarters, he used the most advanced techniques to project himself back to those coordinates in February of 1985, and he began to receive strong impressions almost immediately of a man in his early thirties convulsed in his chair with uncontrollable laughter. There was also a sound associated with the vision: something like poutine, which may refer to something the man had been eating, or may be related to the man in some other fashion.

IN SCIENCE NEWS.

ASK DR. BOLI.

A typical Mason, up to no good.

Dear Dr. Boli: I’ve been worrying about the global Masonic conspiracy lately, and the more I think about it, the more I worry. What can I do to protect myself from Masons if they should come after me? And it seems very likely that they will come after me, since I hold a position of some responsibility in the Grant Borough Department of Public Works. —Sincerely, A Quivering Wreck.

Dear Sir or Madam: The only way to keep yourself safe from Masons is to prepare beforehand. Fortunately it is not expensive to arm yourself against Masonry. Just as Kryptonite can deprive Superman of his superhuman abilities, at least until the writer and artist decide it is time for him to snap out of it, so a Mason can be deprived of his occult powers by Masonite. Go to your local stationer and stock up on clipboards and report covers and suchlike things, and you should be well prepared to fend off any attacks by roving Masons.

HOW TO DRAW CONCLUSIONS LIKE A REAL ARCHAEOLOGIST.

The earliest burials at the site are believed to be located in the conical mound and date back to about 250 BC. Many of the people buried in this mound had copper tools and ornaments buried with them.… People that were buried later did not have this type of artifacts buried with them and some burials do not contain artifacts. This tells us that over the 2,000 years that ancient people used the site, burial practices and ceremonies changed.

ASK DR. BOLI.

Dear Dr. Boli: A lot of us here experts have been very concerned about artificial intelligence. These AI companies are training their robot brains on our writing, which took us a whole lot of time to put together and get past peer review, and then they tell people to come to the robot for all their answers. Well, where does that leave us? So, like I said, we’re kind of concerned, and maybe a little hot under the collar about it. But we thought we’d ask you what you thought, because you’re kind of an expert, too, although we had a big argument about what kind of expert you are. —Sincerely, Milfort Quaid, Secretary and Treasurer, Middle American Society of These Here Experts.

Dear Sir: Speaking as the author of the Encyclopedia of Misinformation, Dr. Boli has no objection to AI bots training themselves on his writing.

DR. BOLI’S ALLEGORICAL BESTIARY.

No. 29. The Mosquito.

There are a number of deadly diseases and plagues that would be unable to survive and spread without the aid of the mosquito. Microorganisms by the trillions would perish, and whole species would become extinct.

Nor are microorganisms the only beings that profit from the existence of mosquitoes. On a summer evening, when the sun has gone down in fiery splendor, and billowy clouds are painted salmon and peach all across the sky, and the heady scents of evening blossoms hover in the cooling air, human beings might enter a state of complacent contentment and universal benevolence, were it not for the mosquitoes who irritate them and stir them up to real accomplishments, such as wars and massacres.

Mosquitoes are elegantly constructed creatures, nearly invisible in flight, and having a natural teleportative ability. You can slap at a mosquito, but the mosquito will not be there when the blow lands. The few mosquitoes that do allow themselves to be slapped have usually gorged themselves into suicidal depression.

Allegorically, the mosquito is the patron insect of car alarms, stuck kitchen drawers, construction zones, public-address systems, and other irritants that give civilization its character.

ASK DR. BOLI.

Dear Dr. Boli: I was watching some nutrition expert on YouTube, and I mean he must have been an expert or he wouldn’t have been on YouTube, but he left me confused. He was talking about how Americans’ health problems are caused by “hyperpalatable” foods, but as an example of a “hyperpalatable” food he mentioned Pop-Tarts. Insert question mark in parentheses. So I was thinking that maybe “hyperpalatable” doesn’t mean what I think it means, and I was wondering whether you could explain it. —Sincerely, A Big Fan of Food, but Not Really of Pop-Tarts.

Dear Sir or Madam: To understand what “hyperpalatable” means, you must keep in mind the Mencken dictum that no one ever went broke by underestimating the taste of the American people.

First of all, the word “hyperpalatable” is itself a sin against good taste. It is a middlebrow coinage at home among middlebrow YouTube pundits; it mashes Greek and Latin together, which seldom produces euphonious results. “Superpalatable” would be better and identical in meaning, combining a Latin prefix with a Latin root and suffix; but because “super” is readily understood even by uneducated English-speakers, the middlebrow prefers to say “hyper,” which sounds scientific because it is less usual.

But even if the YouTubist had used the proper term, we are still left with the necessity of explaining why he thought Pop-Tarts were more than usually delicious. The only explanation Dr. Boli can think of is that your YouTube personality was an American consumer.

It is true that an ordinary human being of ordinary tastes, confronted by a choice between Pop-Tarts from the convenience store and paczki from the local bakery, would not pick the Pop-Tarts as the more palatable of the two. But American commerce has bred a community of consumers who do not have ordinary tastes. Many of them have never set foot in a local bakery. Although Pop-Tarts are made by a process originally designed for dog food, they bring together dough and sugar, thus making a first step toward deliciousness.

In Dr. Boli’s opinion, the loose talk about “hyperpalatable” foods is missing what makes these foods ubiquitous and successful. Even the word “superpalatable” would be wrong, for obvious reasons. They are not superpalatable; but they are superconvenient—especially for the peddlers of snacks. It is difficult to make paczki that will survive distribution to local supermarkets for sale even the next day; but it is easy to make foil-packed dehydrated toaster pastries that will sit on a shelf for months or possibly years with no obvious chemical change. For the consumer who trusts only national brands and who buys snacks at a convenience store, the manufactured foods are the ones that are always available; and for many consumers they are the only foods they ever experience.

What is to be done? Dr. Boli is often suspicious of massive government interventions, but there is a war to be won here. The public welfare is at stake. The obvious solution is a government program to make sure that the average citizen is no more than two blocks’ walk from a bakery selling fresh pastries of the most delicious sorts. Will that make Americans healthier? Almost certainly not; in fact, they might die even younger. But they will die praising God, and thus their eternal welfare will be assured.

KILO, MEGA, KIBI, MEBI…

In fact, 12 million pixels is a number with odd mathematical properties in photography. It is very rare for a camera to make pictures with a number of pixels in either dimension that is divisible by a big round number like 1,000; usually the dimensions are something like 4,608 x 3,456. The reason has to do with the aspect ratios of rectangular pictures, which in most cameras are either 3 x 2 or 4 x 3. At an aspect ratio of 4 x 3, 4,000 by 3,000 pixels make up exactly 12 million pixels, which is intellectually satisfying; but you need some math to figure out what figures make up 10 million pixels or 16 million pixels at the same aspect ratio.
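The math in question is not deep, and a minimal sketch in Python may save the reader a pencil. For a picture of N pixels at an aspect ratio of a x b, the width is the square root of N·a/b and the height is the square root of N·b/a. The function name `dimensions_for` is invented for this illustration, and the results are real numbers rather than whole pixels.

```python
import math

def dimensions_for(total_pixels, aspect_w=4, aspect_h=3):
    """Return (width, height) as floats for a rectangle of the given
    aspect ratio whose area is exactly total_pixels."""
    width = math.sqrt(total_pixels * aspect_w / aspect_h)
    height = math.sqrt(total_pixels * aspect_h / aspect_w)
    return width, height

# Compare a few round pixel counts at the 4 x 3 aspect ratio.
for millions in (10, 12, 16):
    w, h = dimensions_for(millions * 1_000_000)
    print(f"{millions} million pixels at 4 x 3: about {w:.1f} x {h:.1f}")
```

Only the 12-million case lands on whole round numbers. Ten million pixels comes out at roughly 3,651 x 2,739 and sixteen million at roughly 4,619 x 3,464, which is presumably why real cameras settle for nearby whole-number dimensions instead.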

So looking for evidence that pixels were counted the way bytes are counted got us nowhere. But our search did lead us into an interesting demonstration of an Internet principle Dr. Boli has pointed out before, to which we may for the sake of convenience assign the name Boli’s Law of Internet Controversy: On the Internet, the victory goes to the most pedantic.

It used to be true that a kilobyte was 1,024 bytes, and a megabyte was 1,024 kilobytes. But the pedants have had their way with “kilobyte” and “megabyte,” insisting that they must be exact powers of ten. Therefore a unit of 1,024 bytes is a kibibyte; and similarly, what you think of as a megabyte is a mebibyte. Wiktionary now classifies the usual meaning of “kilobyte” as a secondary “informal” definition; and we can see the moment the pedants invaded, because Wiktionary also preserves the discussions that surround its changes. Three years ago, User A said,
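For anyone who wants to see how far apart the two readings actually are, here is a small sketch in Python. The constant and function names are invented for this illustration; the only facts assumed are that the decimal prefixes are powers of ten and that the binary units (kibibyte and mebibyte in the standard spelling) are powers of two.

```python
KILOBYTE_DECIMAL = 10 ** 3   # 1,000 bytes: "kilo" as a power of ten
KIBIBYTE = 2 ** 10           # 1,024 bytes: the older binary reading
MEGABYTE_DECIMAL = 10 ** 6   # 1,000,000 bytes
MEBIBYTE = 2 ** 20           # 1,048,576 bytes

def describe(num_bytes):
    """Express a byte count under both the decimal and binary conventions."""
    decimal = num_bytes / MEGABYTE_DECIMAL
    binary = num_bytes / MEBIBYTE
    return f"{num_bytes:,} bytes = {decimal:.2f} MB (decimal) = {binary:.2f} MiB (binary)"

print(describe(5_000_000))
```

The gap is 2.4 percent at the kilobyte level and grows with each larger prefix, so the quarrel is at least about something measurable.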

Switch def. 1 and 2 even if right (in the past?)

kilobyte (kB) for 1000 bytes might not be rare anymore? Is it standard in Operating systems now? Many computer hackers want to hang on to KB vs kB, but might be admitting defeat and accepting kB and use KiB (or KB, or Kilobyte with capital K, to distinguish).

Note, incidentally, the punctuation that indicates the use of the interrogative tone in discourse, which Dr. Boli keeps promising to write an essay about in his series on cultural neoteny, and he will probably get around to it eventually. To this indefinitely phrased statement, User B replied:

Absolutely. The binary definition of 1024 is officially deprecated, and not only should the numbers be switched it should be made clear that the binary definition is obsolete.

Now, here is an interesting glimpse into the mind of the pedant. Read that sentence again, and then ask yourself: What office has the authority to deprecate, officially, a common noun in English?

There is an answer to that question in many other languages. If we were speaking French, we might be able to refer to an official ruling of the French Academy on the meaning of “kilobyte.” But English never developed such an authority. We may trace the reason back to Samuel Johnson.

The preface to Johnson’s dictionary is a work that truly deserves to be called seminal, because it sowed the seeds for all the lexicographical thoughts that have sprouted in English since Johnson’s time. It is also one of the finest specimens of English prose ever written.

In this preface, Dr. Johnson explains that he had thought he might set the rules for correct English for all time. But then… Well, let us hear it from the Doctor himself:

Those who have been persuaded to think well of my design, require that it should fix our language, and put a stop to those alterations which time and chance have hitherto been suffered to make in it without opposition. With this consequence I will confess that I flattered myself for a while; but now begin to fear that I have indulged expectation which neither reason nor experience can justify. When we see men grow old and die at a certain time one after another, from century to century, we laugh at the elixir that promises to prolong life to a thousand years; and with equal justice may the lexicographer be derided, who being able to produce no example of a nation that has preserved their words and phrases from mutability, shall imagine that his dictionary can embalm his language, and secure it from corruption and decay, that it is in his power to change sublunary nature, or clear the world at once from folly, vanity, and affectation.

This seems like obvious truth to English-speakers, because we have grown up in a world where Johnson’s opinion is accepted as dogma. Yet it is not dogma for other languages, as Johnson himself points out.

With this hope, however, academies have been instituted, to guard the avenues of their languages, to retain fugitives, and repulse intruders; but their vigilance and activity have hitherto been vain; sounds are too volatile and subtile for legal restraints; to enchain syllables, and to lash the wind, are equally the undertakings of pride, unwilling to measure its desires by its strength.

Johnson’s opinion that language cannot be legislated has become the dogma of professional lexicographers. Merriam-Webster defines kilobyte as “a unit of information equal to 1024 bytes,” and then adds, “also: one thousand bytes.” The American Heritage Dictionary has a very similar definition, with a long usage note at gigabyte explaining that the first meaning is more common in most contexts, but the other more common for certain branches of the industry.

None of this satisfies the pedant, however. The professional lexicographers are wrong. The pedant knows, and his authority is indisputable. It is official. The official authority usually turns out to be a high-school English teacher who taught pedantry along with English, but the pedant has absolute faith in the irrefutability of his knowledge. If you want to know how Sisyphus felt, try starting a discussion on the Wiktionary page for kilobyte, saying, “Hey, I don’t think you’re really right about…” You will not win, because you are not the most pedantic person on the Internet.

Well, then, what have we learned? Nothing about megapixels. As far as Dr. Boli has been able to determine, “megapixel” has always meant a million pixels, not a power of two, and not a little bit less than a million pixels, which is what we would require for 4,000 x 3,000 to make 12.1 megapixels. But we have learned, once again, that the only way to win an argument on the Internet is to be more pedantic than the opposition and never to admit the possibility of error. There was a time when Dr. Boli would have called himself pedantic, but the Internet has taught him that he is underqualified for pedantry.
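The arithmetic behind that conclusion can be checked in a few lines of Python. This is only a sketch of the calculation described above, with variable names invented for the purpose: it counts the pixels in a 4,000 x 3,000 image, expresses the count in decimal and in power-of-two "mega" units, and works out how small a "megapixel" would have to be for the advertised 12.1 to come out right.

```python
WIDTH, HEIGHT = 4_000, 3_000
ADVERTISED_MEGAPIXELS = 12.1

pixels = WIDTH * HEIGHT

# "Mega" as a plain million.
decimal_megapixels = pixels / 10 ** 6

# "Mega" counted the way bytes used to be counted (2 to the 20th power).
binary_megapixels = pixels / 2 ** 20

# The size a "megapixel" would need to be for the advertisement to be exact.
implied_megapixel_size = pixels / ADVERTISED_MEGAPIXELS

print(f"Total pixels:            {pixels:,}")
print(f"Decimal megapixels:      {decimal_megapixels:.2f}")
print(f"Power-of-two megapixels: {binary_megapixels:.2f}")
print(f"Implied megapixel size:  {implied_megapixel_size:,.0f} pixels")
```

The power-of-two reading makes the number smaller, about 11.44, so it cannot explain a figure larger than 12. The advertised figure would require a megapixel of a little under 992,000 pixels, which no definition supports.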

THE MEGAPIXEL MYSTERY.

So why, Father Pitt asked, is it advertised as a 12.1-megapixel camera? He could not come up with any good answer to that question. Every definition of “megapixel” he found said that it was a million pixels, not a scant million, not a baker’s million, but a plain old honest-to-goodness million. Multiplying 4,000 by 3,000 gives us 12,000,000, according to the old-fashioned math Father Pitt learned.

Yet cameras with a resolution of 4,000 by 3,000 are usually advertised as 12.1-megapixel cameras. It seems to be the industry standard.

So Father Pitt came to us and asked his question, and of course we gave him the verbal equivalent of a shrug and asked why he thought we should know.

But then the question ate at us.

Well, here is a job for artificial intelligence! Surely the bots, having absorbed the wisdom of the entire Internet, would have come across exactly the answer we were looking for and could distill it into a few short paragraphs in the style of a junior-high-school essay.

So we asked Google’s pet bot, “Why is a resolution of 3000 by 4000 called 12.1 megapixels instead of 12.0 megapixels?”

The answer came quickly and included a numbered list. It began, “A 3000×4000 resolution is exactly 12,000,000 pixels (3000 x 4000), which rounds to 12 megapixels (MP), but…”

Hold on there, Googlebot! You say 12,000,000 pixels rounds to 12 megapixels, but by Dr. Boli’s calculation it is exactly 12 megapixels. This is the anomaly for which we sought an explanation. You are indulging in a petitio principii, or in plain English begging the question.

At any rate, the bot tells us some things that are of little use, the only possibly relevant observation being that sensors don’t necessarily have exactly the stated number of pixels, so there might be slightly more than 12,000,000 pixels in the sensor—which may be true, but not in any way useful if the images that come out are exactly 12,000,000 pixels.

“In summary,” the bot concludes, “your 3000×4000 image is exactly 12MP, but cameras often have sensors with slightly different dimensions or use marketing-friendly rounded numbers, so 12.1MP is just a slightly more detailed label for what’s essentially a 12-megapixel sensor/image.”

It sounds as though the bot has come up with a very polite way of saying, “Your image is exactly 12 megapixels, but marketers lie.”

However, it occurred to Dr. Boli that there are many professionals of different sorts among his readers, and perhaps some of them might know why a camera that produces images with 12,000,000 pixels is sold as a 12.1-megapixel camera. Is there any good answer? Or do we have to settle for “marketers lie” and sadly shake our heads at the state of the world we live in?